Most people approach AI visuals with the wrong mental model. They think the process begins with a prompt, as if the best results always come from typing a clever sentence into a blank box. That works for experimentation, but it is rarely the most reliable path when the goal is to create something usable. That is why image-to-image feels more relevant than many prompt-first workflows: it starts with an existing visual asset and builds from there.

That difference sounds small, but it changes everything. When the starting point is a real image instead of an empty canvas, the process becomes easier to guide, easier to evaluate, and often easier to repeat. In practice, that makes the workflow more aligned with how creators, marketers, designers, and founders actually work. They usually do not need endless novelty. They need a stronger version of something they already have.

Why Blank Canvas Logic Often Breaks Down

Toimage AI is at its most impressive when it surprises you. It is less impressive when you need consistency.

A blank-canvas workflow asks the model to decide too many things at once. It has to infer composition, subject identity, framing, lighting, visual tone, and stylistic direction from text alone. Even strong prompts leave room for drift. The result may look polished, but it can still miss the actual creative objective.

That is why text-only generation often feels unstable in production settings. It can produce beautiful outputs, yet still fail the more important test: does this serve the original idea?

A Source Image Narrows The Guesswork

Once a source image enters the workflow, the system no longer has to imagine the entire starting point. It can read visible structure, proportions, mood, placement, and subject cues directly from the input. That does not remove uncertainty entirely, but it reduces a lot of unnecessary guessing.

Control Comes From Shared Visual Ground

This is the real shift. The user and the model are now working from the same visual reference. That shared ground makes the output easier to steer and easier to judge.

How The Public Workflow Is Structured

Judging from the publicly visible image workspace, the process is designed around a few clear decisions rather than a long technical chain. That is one reason the product feels approachable.

The Platform Begins With Model Choice

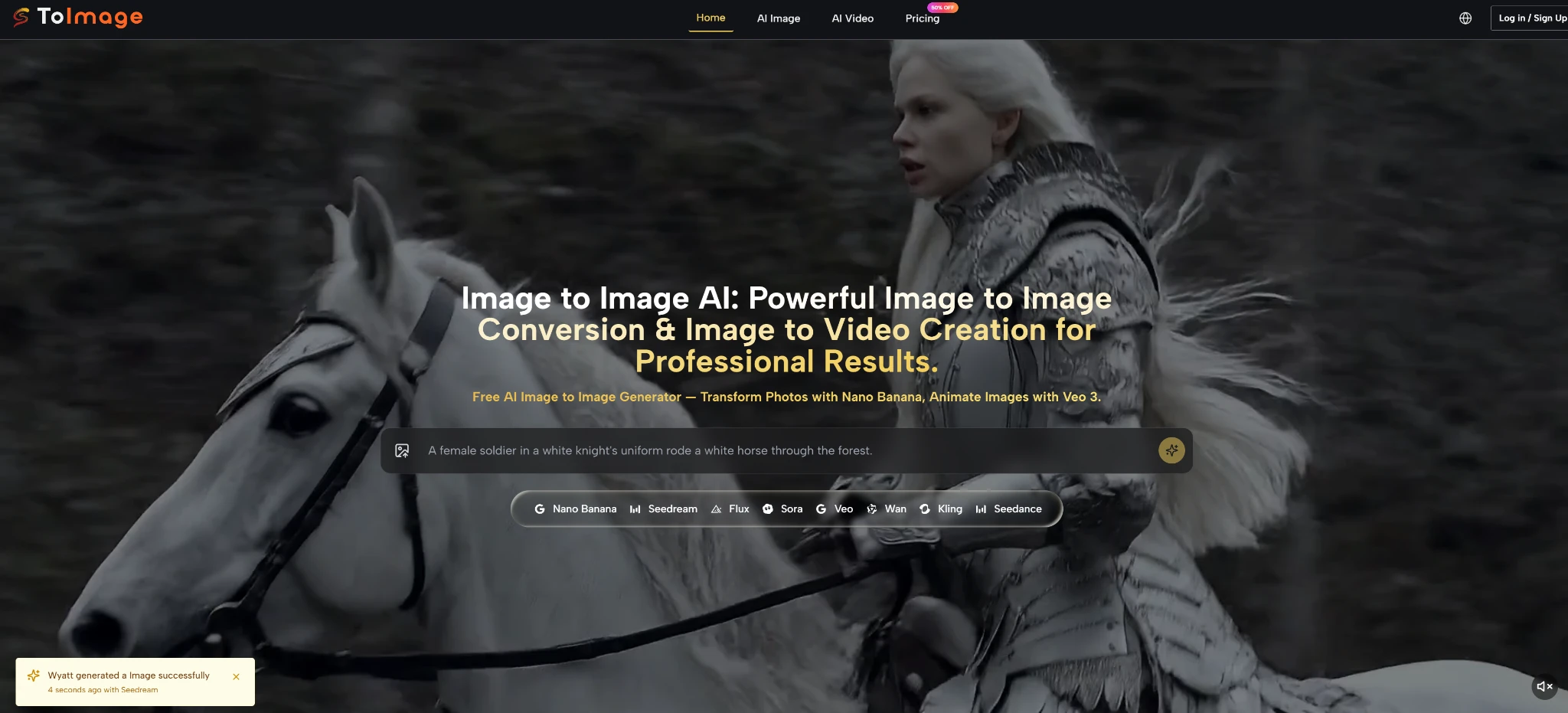

Instead of hiding the engine behind one default experience, the workspace surfaces multiple image models. Publicly visible options include Nano Banana, Qwen, Seedream, Flux, Midjourney, and GPT-4o. This suggests the platform is built around model selection as part of the creative workflow, not as an afterthought.

The Core Input Can Be Text Or An Existing Image

The image product publicly presents two routes. One is text to image, where users generate from a description alone. The other is image to image, where users upload a photo and describe the transformation they want. That combination matters because it gives users two different entry points depending on whether they are exploring from zero or refining from an asset.

The Process Encourages Iteration Rather Than One Shot Perfection

Even at the level of public product structure, the logic is clear: choose a model, provide direction, generate, then compare. This is not only about making one image. It is about moving through variations with intent.

The Interface Implies A Practical Rhythm

That rhythm matters more than it seems. A lot of AI products are built for spectacle. This one, at least from the visible workflow, looks built more for repeated use.

How To Think About The Model Layer

A common mistake is treating all AI image models as interchangeable. The public descriptions on this platform suggest the opposite.

Nano Banana Looks Best For Reference Heavy Work

Nano Banana is publicly positioned around realism and image-to-image transformation. The platform also states that it can work with up to four reference images. That makes it particularly relevant for tasks where continuity matters, such as keeping a subject recognizable or blending several visual cues into one consistent output.

Seedream Feels Better For Faster Exploration

Seedream is described in a way that suggests speed and flexibility. That makes it easier to picture as a strong option for early-stage experimentation, where the user wants to test multiple directions quickly before committing to a more polished pass.

Flux Appears More Editing Oriented

Flux is framed around more precise visual control. That is important because not every task is about dramatic transformation. Sometimes the goal is to preserve most of the source image while adjusting certain elements with more care.

Different Models Support Different Creative Temperatures

Some tasks need bold reinterpretation. Others need restraint. A multi-model setup is useful because it lets users choose the temperature of change rather than forcing one visual behavior onto every project.

Where This Workflow Becomes Genuinely Useful

The strongest case for image-to-image is not theoretical. It becomes obvious when you look at ordinary creative tasks.

A founder with rough product photos may need cleaner marketing visuals. A content creator may want several looks from one strong portrait. A designer may want to push a draft into a more finished direction. A brand team may want to test alternative campaign moods while preserving a recognizable base.

These are not fringe use cases. They are standard visual problems.

It Helps Preserve What Already Works

If a source image already contains the right angle, silhouette, or emotional tone, rebuilding from scratch can waste time. Image-to-image lets the user carry forward the part that already works.

It Makes Variation Less Expensive Mentally

The creative strain of re-prompting from zero is real. With a source image in place, each new attempt feels like a revision, not a reinvention. That is usually easier to manage and easier to evaluate.

It Supports Repeatable Output Better

When several images need to feel related, reference-driven workflows have a natural advantage. Even before talking about advanced consistency techniques, the simple fact of beginning from a shared source helps outputs feel more connected.

A More Useful Way To Evaluate Quality

People often judge AI image tools by asking whether the output looks impressive. That is too shallow.

A better question is whether the output is useful for the task. In that sense, image-to-image changes the standard of success.

Good Results Are Not Only About Visual Beauty

An image can look attractive and still fail because it changes too much, loses the product identity, or drifts away from the intended style. In applied work, those failures matter more than surface polish.

Reference Based Workflows Improve Evaluation

Because there is a visible before-and-after relationship, the user can judge whether the transformation is actually successful. That makes quality assessment more concrete.
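
One rough way to make that before-and-after judgment measurable is to compare pixel values between the source and the output. The sketch below is only an illustration: the function names, the flat grayscale-list representation, and the thresholds are all assumptions, and a pixel difference is a crude proxy that cannot tell whether a change was the one you wanted.

```python
def change_ratio(source, output):
    """Mean absolute difference between two grayscale images, scaled to 0..1.

    Both arguments are flat lists of pixel values in 0..255. This is an
    assumed, simplified representation; real images would be decoded first.
    """
    if len(source) != len(output) or not source:
        raise ValueError("images must be non-empty and the same size")
    total = sum(abs(a - b) for a, b in zip(source, output))
    return total / (255 * len(source))


def verdict(ratio, low=0.05, high=0.60):
    """Label a change ratio. The thresholds are illustrative, not calibrated."""
    if ratio < low:
        return "barely changed"
    if ratio > high:
        return "drifted far from source"
    return "changed within range"
```

A score near zero suggests the transformation did very little, while a score near one suggests the output no longer resembles the source. Where the useful middle lies depends on the task.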

| Evaluation Point | Text First Workflow | Image To Image Workflow |
| --- | --- | --- |
| Starting context | Built from words alone | Built from a real visual source |
| Control over structure | Lower at the start | Stronger from the first pass |
| Ease of comparison | Harder to benchmark | Easier against the source image |
| Consistency across outputs | More prompt dependent | More reference dependent |
| Best use case | Exploration from nothing | Refinement from something real |

This is why image-to-image often feels more professional, even when both methods use strong models underneath.

How To Use The Workflow More Intelligently

The public flow is simple, but the quality of results still depends on how the user thinks.

Start With A Clear Visual Asset

A better source image gives the model better material. If the original subject, composition, or lighting is legible, the transformation usually becomes easier to guide.

Choose The Model Based On The Job

Do not choose a model because it sounds fashionable. Choose it because the task needs realism, speed, or more controlled editing behavior.
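
That choice can be written down as a small lookup. The sketch below is hypothetical: the model names and the four-reference limit come from the public descriptions discussed earlier, but the mapping, the need labels, and the `pick_model` helper are assumptions made for illustration, not part of any platform API.

```python
# Need labels are invented for this sketch; the pairing follows how each
# model is publicly positioned (realism, speed, controlled editing).
MODEL_FOR_NEED = {
    "realism": "Nano Banana",
    "speed": "Seedream",
    "controlled_editing": "Flux",
}

# The platform states Nano Banana can work with up to four reference images.
# No limits are assumed for the other models.
MAX_REFERENCES = {"Nano Banana": 4}


def pick_model(need, reference_count=1):
    """Return a model name for a task need, checking any known reference limit."""
    if need not in MODEL_FOR_NEED:
        raise ValueError(f"unknown need: {need!r}")
    model = MODEL_FOR_NEED[need]
    limit = MAX_REFERENCES.get(model)
    if limit is not None and reference_count > limit:
        raise ValueError(f"{model} accepts at most {limit} reference images")
    return model
```

Writing the decision down this way forces the question "what does this job need?" before the question "which model is popular?".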

Write For Change, Not For Reinvention

In image-to-image workflows, the prompt works best when it clearly defines what should change. The source image already carries part of the meaning. The text should push direction, not repeat everything the image already shows.

Generate In Rounds, Not In Hope

One pass for direction. One pass for refinement. One pass for a stronger finish. This pattern is more reliable than expecting perfection from a single attempt.
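
The round-based pattern, combined with prompts that describe change rather than the whole scene, can be sketched as a short loop. Everything here is an assumption for illustration: `build_prompt` and `run_rounds` are invented names, `generate` is a placeholder the caller supplies for whatever tool is in use, and feeding each result into the next round is one possible reading of the three passes.

```python
def build_prompt(change, keep=()):
    """Describe what should change and, optionally, what must stay the same."""
    prompt = f"Change: {change}."
    if keep:
        prompt += " Keep: " + ", ".join(keep) + "."
    return prompt


def run_rounds(source, steps, generate):
    """Run one generation per step, passing each result into the next round.

    `steps` is a list of (change, keep) pairs. `generate(image, prompt)` is a
    caller-supplied placeholder, not a real platform call.
    """
    history = [source]
    for change, keep in steps:
        history.append(generate(history[-1], build_prompt(change, keep)))
    return history


# Usage with a stand-in generator that only records what it was asked to do.
def fake_generate(image, prompt):
    return f"{image} | {prompt}"


rounds = run_rounds(
    "portrait.png",
    [
        ("warmer evening light", ("pose", "framing")),  # direction
        ("cleaner background", ("subject",)),           # refinement
        ("sharper detail", ()),                         # finish
    ],
    fake_generate,
)
```

Keeping the full history makes it possible to compare any round against the original source rather than only against the previous attempt.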

What Keeps The Workflow Credible

A good AI product becomes more trustworthy when its promise matches its likely use. Here, the public product direction feels grounded because it is tied to understandable jobs: visual transformation, reference-based creation, model choice, and iterative output.

That does not mean the workflow is effortless. Results still depend on prompt clarity, source quality, and model selection. Some attempts will still miss. Some tasks will still need multiple generations. In my view, that honesty makes the workflow easier to believe in, not less compelling.

Why Asset Based Creation Will Matter More

As AI visual tools mature, the most important shift may not be toward bigger claims. It may be toward better starting points.

That is why image-to-image feels strategically important. It replaces the fantasy of perfect prompting with a more grounded idea: begin with an asset, then improve it intelligently. That approach matches how visual work usually happens in the real world. People revise, upgrade, restyle, and adapt. They do not always want to create from nothing.

Seen from that angle, the value of this workflow is not just that it can produce attractive images. It is that it offers a more stable bridge between intent and output. And in practical creative work, that bridge is often worth more than novelty alone.